It’s been one whole year since we publicly announced GoodAI. I want to celebrate the anniversary by looking back at what we have achieved in the past 12 months, and tell you a bit more about what we are planning for the future.

Our progress to date and next steps include:

- Framework

- Roadmap

- School for AI

- Growing Topology Architecture

- Arnold Simulator

- AI Roadmap Institute

- GoodAI Consulting

GoodAI started as a project within Keen Software House in January 2014. It was announced to the public on July 7, 2015, and has now grown to a team of 30 researchers. Together with Keen Software House, we have team members from 17 countries!

GoodAI’s mission is to develop general artificial intelligence

– as fast as possible –

to help humanity and understand the universe.

One year ago, our primary approach to building general AI was through Brain Simulator, our in-house collaborative platform that third-party researchers, developers, and tech companies could use to prototype and simulate artificial brain architectures, share knowledge, and exchange feedback. At that time, we were exploring various approaches to building general AI, trying to gain a better understanding of the field as a whole, and consolidating our own specific approach to the problem.

One year later, we’re working on several things that together form our focused approach to building general artificial intelligence.

We have focused mainly on our R&D roadmap. Together with our framework, it is our latest achievement. The roadmap started out almost as a side project, but the importance of a strategic overview of the AI landscape quickly became apparent. It will help us choose research directions more efficiently and reduce the complexity of development within those directions.

I feel that we have accomplished a lot during the last year.

I am very satisfied with our progress.

Framework

We view intelligence as a tool for searching for solutions to problems. The guiding principles of our AI research revolve around an agent which can accumulate skills gradually and in a self-improving manner (where each new skill can be reused and improved in the accumulation of further skills).

Each new skill works like a heuristic that helps to guide and narrow the search for problem solutions. Some heuristics even increase the efficiency of the search for additional heuristics.
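To make the "skills as heuristics" idea concrete, here is a minimal sketch, not GoodAI's actual method: a standard A*-style search on a small grid, run once blind and once guided by a heuristic. The grid world, the function names, and the Manhattan-distance heuristic are all assumptions chosen for illustration; the point is only that a good heuristic reaches the same solution while expanding fewer states.

```python
import heapq

def best_first_search(start, goal, neighbors, heuristic=lambda state: 0):
    """A*-style search. Returns (path_cost, number_of_expanded_states)."""
    frontier = [(heuristic(start), 0, start)]
    best_cost = {start: 0}
    expanded = 0
    while frontier:
        _, cost, state = heapq.heappop(frontier)
        if cost > best_cost.get(state, float("inf")):
            continue  # stale queue entry, a cheaper route was found already
        expanded += 1
        if state == goal:
            return cost, expanded
        for nxt in neighbors(state):
            new_cost = cost + 1
            if new_cost < best_cost.get(nxt, float("inf")):
                best_cost[nxt] = new_cost
                heapq.heappush(frontier, (new_cost + heuristic(nxt), new_cost, nxt))
    return None, expanded

def grid_neighbors(state, size=20):
    """Four-connected moves on a hypothetical size x size grid."""
    x, y = state
    for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nx, ny = x + dx, y + dy
        if 0 <= nx < size and 0 <= ny < size:
            yield (nx, ny)

start, goal = (10, 10), (19, 19)

def manhattan(state):
    """The 'skill': an estimate of the remaining distance to the goal."""
    return abs(goal[0] - state[0]) + abs(goal[1] - state[1])

blind_cost, blind_expanded = best_first_search(start, goal, grid_neighbors)
guided_cost, guided_expanded = best_first_search(start, goal, grid_neighbors, manhattan)
print(f"blind:  cost={blind_cost}, expanded={blind_expanded}")
print(f"guided: cost={guided_cost}, expanded={guided_expanded}")
```

Both runs find a path of the same length, but the guided run looks at far fewer states. In this picture, a learned skill plays the role of `manhattan`: it does not change the problem, it narrows where the agent searches.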

These principles have inspired our framework document, which describes how we understand intelligence and provides tools for studying, measuring, and testing various skills and abilities.

The framework itself will aim to be as implementation-agnostic as possible, without regard to particular learning methods or environments. It will provide an analytic, systematic, and scalable way to generate hypotheses that are possibly relevant in the search for general AI.

R&D roadmap

The research roadmap is an ordered list of skills and abilities (research milestones) that our AI will need to acquire in order to achieve human-level intelligence. Each skill or ability represents an open research problem, and these problems can be distributed among different research groups, either internally at GoodAI or among external researchers and hobbyists.

There are two parts to the roadmap:

- a map for the open problems

- a map for known and proposed solutions (where each problem may have multiple or branching solutions)

The roadmap is a living document which will be updated as we work towards the milestones and evaluate them within the framework document.
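One way to picture the two-part structure is as a small data model. This is only an illustrative sketch under our own assumptions: the class names, fields, and the example entries are hypothetical and do not describe the format of the actual roadmap document.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class Solution:
    name: str
    known: bool = False  # False means the solution is only proposed
    branches: List["Solution"] = field(default_factory=list)  # branching variants

@dataclass
class Milestone:
    skill: str  # the open research problem
    solutions: List[Solution] = field(default_factory=list)

    def is_open(self) -> bool:
        """A milestone stays open until at least one solution is known to work."""
        return not any(s.known for s in self.solutions)

@dataclass
class Roadmap:
    milestones: List[Milestone] = field(default_factory=list)  # kept in order

    def open_problems(self) -> List[str]:
        return [m.skill for m in self.milestones if m.is_open()]

# Hypothetical entries, for illustration only.
roadmap = Roadmap([
    Milestone("accumulation of skills", [
        Solution("placeholder solution A", known=True),
        Solution("placeholder solution B", branches=[Solution("variant B1")]),
    ]),
    Milestone("reuse of skills", [Solution("placeholder solution C")]),
    Milestone("another future skill"),
])

print(roadmap.open_problems())
```

Keeping problems and solutions as separate objects means a milestone can collect several competing or branching solutions without changing its place in the ordered list.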

The current version of the documents is early-stage and a work in progress. We anticipate that more milestones and research directions will be added to the roadmap as our understanding matures.

The first version of the roadmap and framework will be released to the public within the next couple of months. There will still be many parts missing, but we feel that it is better to engage with the community as soon as possible.

School for AI

What is the goal of the School for AI? We expect the AI to be able to learn. Of course, some intrinsic skills will be hard-coded, and the AI has to be “born” with them. Other skills will be learned. We will teach the AI these skills in a gradual and guided way in our School for AI which we are now developing.

In the School for AI, we first design an optimized set of learning tasks, or as we say, a “curriculum.” The curriculum teaches the AI useful skills / heuristics, so it doesn’t have to discover them on its own. Without a curriculum, the AI would waste time exploring areas that evolution and society already explored, or those that we know are not useful or perhaps dangerous.
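As a rough sketch of what "gradual and guided" could mean in practice, a curriculum can be modeled as learning tasks with prerequisites, taught in an order that respects those prerequisites. The task names and the dependency structure below are invented for illustration, and ordering by prerequisites is our assumption here, not a description of how the School for AI is implemented.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical learning tasks: each task maps to the tasks it builds on.
prerequisites = {
    "detect_shapes": set(),
    "count_objects": {"detect_shapes"},
    "compare_quantities": {"count_objects"},
    "simple_addition": {"count_objects"},
}

# static_order() yields every task after all of its prerequisites.
curriculum = list(TopologicalSorter(prerequisites).static_order())

for lesson_number, task in enumerate(curriculum, start=1):
    print(lesson_number, task)
```

Each task appears only after the skills it depends on, so the learner never has to rediscover a prerequisite on its own.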

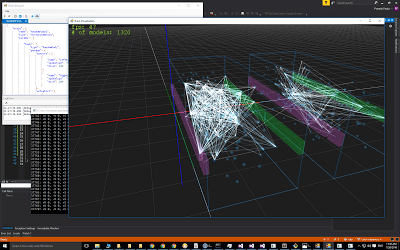

Arnold Simulator

Arnold Simulator is a software platform designed for the rapid prototyping of AI systems with highly dynamic neural network topologies. The software will provide tools for our research and development, but it is also designed for high performance and it’s transparently scalable to large computer clusters.

It is the next generation of GoodAI's in-house prototyping software. It follows in the steps of GoodAI’s Brain Simulator, which focused more on standard machine learning algorithms. We’re designing it for large, highly dynamic, heterogeneous, and heterarchical networks of lightweight actors, with a focus on concurrency, parallelism, and low-latency messaging. For concurrency, we’re using the actor model, where independent actors communicate via messages. The simulation runs in discrete time-steps, during which the individual actors are processed in parallel. In between the simulation steps, the system can interact with any virtual or real environment via sensors and actuators. The design of Arnold Simulator will allow us to effectively implement the growing general AI architectures we are focusing on.
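The stepping scheme described above can be sketched in a few lines. This is a toy illustration, not Arnold Simulator's API: the `Relay` and `Simulation` classes and their methods are hypothetical, and Python threads stand in for real parallelism only conceptually (CPython threads do not run Python code on multiple cores at once).

```python
from concurrent.futures import ThreadPoolExecutor

class Relay:
    """A lightweight actor: records what it receives and forwards value + 1."""
    def __init__(self, name, target):
        self.name = name
        self.target = target
        self.seen = []

    def step(self, messages):
        self.seen.extend(messages)
        return [(self.target, m + 1) for m in messages]  # (recipient, payload)

class Simulation:
    def __init__(self, actors):
        self.actors = {a.name: a for a in actors}
        self.mailboxes = {name: [] for name in self.actors}

    def send(self, recipient, payload):
        """Used between steps, e.g. to inject a sensor reading."""
        self.mailboxes[recipient].append(payload)

    def step(self, pool):
        # Swap mailboxes so messages sent now are delivered in the next step.
        delivered = self.mailboxes
        self.mailboxes = {name: [] for name in self.actors}
        names = list(self.actors)
        # Each actor touches only its own state, so they can run concurrently.
        results = pool.map(lambda n: self.actors[n].step(delivered[n]), names)
        for outgoing in results:
            for recipient, payload in outgoing:
                self.mailboxes[recipient].append(payload)

a, b = Relay("a", target="b"), Relay("b", target="a")
sim = Simulation([a, b])
sim.send("a", 0)  # environment input before the first step

with ThreadPoolExecutor() as pool:
    for _ in range(4):
        sim.step(pool)
        # Between steps, the environment could read actuators or write sensors.

print(a.seen, b.seen, sim.mailboxes["a"])
```

Because outgoing messages are collected and delivered only at the step boundary, the result does not depend on the order in which actors happen to run within a step.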

Working groups

The GoodAI team is organized into four smaller working groups: the Brain group, School group, Software Engineers, and our AI Safety team.

- Brain group is working on the implementation of solutions to research topics, mostly focusing on growing topologies, modular networks, and the reuse of skills. These are the guys who are implementing hardcoded skills.

- School group is studying the skills that the AI needs to acquire (hardcoded or learned), and designing learning tasks for efficient education. They’re also working on the R&D roadmap and mapping various curricula. These are the guys who will train the learned skills.

- Software engineers are building our Arnold Simulator.

- AI Safety team is studying the safe path forward with our technology and how to mitigate threats to our team and humankind as a whole. They are also creating an alliance of AI researchers committed to the safe development of AI and general AI, developing our futuristic roadmap, and more.

Futuristic roadmap

While our R&D roadmap covers research and technology plans, our futuristic roadmap is focused on freedom, society, ethics, the universe, people, the Earth, politics, the economy, and more. Its contents include a description of the long-term future we want to build, and a step by step description of how we want to get there while mitigating the risks and challenges we might face along the way.

Our AI Safety team is working on this.

AI Roadmap Institute

We’re also entertaining the idea of setting up an independent institute dedicated exclusively to the study of (general) AI roadmaps. It would focus only on the big picture and stay agnostic to implementation details. It would also promote the importance of big-picture thinking, long-term planning, and detailed roadmaps, and perhaps shift the attention of the AI community in this direction.

The AI Roadmap Institute is a new initiative to collate and study various AI and general AI roadmaps proposed by those working in the field. It will map the space of AI skills and abilities (research topics, open problems, and proposed solutions). The institute will use architecture-agnostic common terminology provided by the framework to compare the roadmaps, allowing research groups with different internal terminologies to communicate effectively.

The amount of research into AI has exploded over the last few years, with many papers appearing daily. The institute’s major output will be consolidating this research into an (ideally single) visual summary which outlines the similarities and differences among roadmaps, where roadmaps branch and converge, stages of roadmaps which need to be addressed by new research, and where there are examples of skills and testable milestones. This summary will be constantly updated and available for all who are interested, regardless of technical expertise.

There are currently two categories of roadmaps: research and development roadmaps, which describe how to get to general AI, and safety/futuristic roadmaps, which explore how to keep humanity safe and what happens in the years after general AI is reached. These roadmaps will be described by the institute using the framework in an implementation-agnostic manner. The roadmaps will show the problems, and any proposed solutions and the implementations of others will be mapped out in a similar manner.

The institute is concerned with ‘big picture’ thinking, without focusing on local problems in the search for general AI. With a point of comparison among different roadmaps and with links to relevant research, the institute can highlight aspects of AI development where solutions exist or are needed. This means that other research groups can take inspiration or suggest new milestones for the roadmaps.

Finally, the institute is for the scientific community and everyone will be invited to contribute. It will phrase higher level concepts in an accessible and architecture-agnostic language, with more technical expressions made available to those who are interested.

Growing Topology Architecture

We are trying to implement the first prototypes of neural architectures that support the gradual accumulation of skills. This is the implementation side of our work, rather than the big picture / theoretical side of what we do.

The Framework – a systematic method for designing roadmaps and proposing solutions to various AI research topics – is helping us to generate useful hypotheses for which skills to implement first, research directions to take, and solutions. Essentially, if you view intelligence as a search problem, then you can see various AI skills and abilities as heuristics which increase the efficiency of the search.

For example, we have identified that the accumulation of skills is one of the first intrinsic heuristics we need to implement in order to allow AI to learn gradually and self-improve.

PR

Our PR plans include promoting the R&D roadmap, the framework, and the AI Roadmap Institute, all of which will help us find like-minded people and facilitate collaboration with academics and the general public.

GoodAI Consulting

GoodAI Consulting is an AI-focused consulting firm using state-of-the-art artificial intelligence solutions to maximize business success for companies and organizations. GoodAI Consulting started because of high demand for the cutting-edge know-how of GoodAI researchers. Our world-class research team focuses solely on general AI research, and is building technology that won’t be on the market for another 5-10 years. Clients of GoodAI Consulting receive exclusive and preferential access to the GoodAI core team and research results.

GoodAI Consulting is hiring, so join us or sign up to learn more!

GoodAI web site: www.GoodAI.com

We’ve updated our website to reflect the most up-to-date description of our work, our progress, what we are doing, how we are doing it, why, and so on. If you’d like to learn more about our framework, roadmap, and other areas of focus, spend some time on our About page.

Our website is essentially a summarized knowledge base of GoodAI, but if there’s anything you can’t find there, just send us an email at info@goodai.com.

Contacts, friendship, and commitment to cooperation

We have built a substantial network of like-minded people, from academia to business, whom we reach out to when discussing and brainstorming ideas, problems, and solutions. We are very fortunate to have them.

—

As a whole, in the past year we focused much more on the big picture (architecture, strategy, and roadmapping) than on implementation or solving specific and narrow problems. In my view, the big picture for general AI is currently under-researched, and any gains we make in this direction can have a dramatic impact on lower-level, more focused implementations.

Certain things in GoodAI are the same as they were one year ago, however. We’re still self-funded – I invested $10mil at the start of the project, have continuously added funding since then, and am prepared to increase my amount of personal funding in the future to ensure that we always have $10mil+ as a reserve going forward. We are also still completely devoted to our goal of developing general artificial intelligence.

We’re committed to the idea that solving a general problem will, in the end, offer better outcomes than trying to solve a set of specific problems – even if the narrower problems seem easier to tackle at first.

“There lies the inventor’s paradox, that it is often significantly easier to find a general solution than a more specific one, since the general solution may naturally have a simpler algorithm and cleaner design, and typically can take less time to solve in comparison with a particular problem.”

– Bruce Tate

We aim for general AI, not narrow AI use cases. This approach allows us to restrict the search for the right solution and focus more resources on our desired long term goal.

Thanks for reading!

Marek Rosa

CEO, CTO, and Founder of GoodAI

CEO and Founder of Keen Software House

🙂

Follow us on social media:

Facebook: https://www.facebook.com/GoodArtificialIntelligence

Twitter: @GoodAIdev

www.GoodAI.com

www.KeenSWH.com
